[ad_1]

When you’ve all the time been in awe of oldsters utilizing the Google Search Console API to do cool issues, this text is an effective learn for you.

You need to use BigQuery with the GSC bulk knowledge export to get among the similar advantages with out requiring the assistance of a developer.

With BigQuery, you possibly can effectively analyze massive volumes of information from the GSC bulk knowledge export.

You received’t have real-time knowledge retrieval; that’s obtainable with the API in our situation however you possibly can depend on each day knowledge imports which implies that you’re working with up-to-date info.

By leveraging BigQuery and the GSC bulk knowledge export, you possibly can entry complete search analytics knowledge – that’s the half you hear everybody raving about on LinkedIn.

In line with Gus Pelogia, search engine optimisation product supervisor at Certainly:

“It’s such a sport changer and a fantastic alternative to study SQL. We are able to lastly bypass GSC and exterior search engine optimisation instruments limitations. I used to be stunned to see how easy it was to retrieve knowledge.”

A Structured Method To Utilizing BigQuery And Google Search Console (GSC) Information For Content material Efficiency Evaluation

The intention of this text is to not give you an extended record of queries or a large step-by-step blueprint of conduct probably the most intense audit of all time.

I intention to make you are feeling extra comfy entering into the groove of analyzing knowledge with out the restrictions that include the Google Search Console interface. To do that, you could think about 5 steps:

Determine use circumstances.

Determine related metrics for every use case.

Question the info.

Create a looker studio report to assist stakeholders and groups perceive your evaluation.

Automate reporting.

The problem we regularly face when getting began with BigQuery is that all of us need to question the info straight away. However that’s not sufficient.

The true worth you possibly can deliver is by having a structured method to your knowledge evaluation.

1. Determine Use Instances

It’s typically really helpful that you already know your knowledge earlier than you determine what you need to analyze. Whereas that is true, on this case, it will likely be limiting you.

We suggest you begin by figuring out the particular goal and objectives for analyzing content material efficiency.

Use Case #1: Determine The Queries And Pages That Carry The Most Clicks

“I imagine that each high-quality search engine optimisation audit also needs to analyze the positioning’s visibility and efficiency in search. When you establish these areas, you’ll know what to concentrate on in your audit suggestions.”

Stated Olga Zarr in her “How you can audit a website with Google Search Console” information.

To do this, you need the queries and the pages that deliver probably the most clicks.

Use Case #2: Calculating UQC

If you wish to spot weak areas or alternatives, calculating the Distinctive Question Rely (UQC) per web page affords useful insights.

You already know this since you use any such evaluation in search engine optimisation instruments like Semrush, SE Rating, Dragon Metrics, or Serpstat (the latter has a fantastic information on How you can Use Google Search Console to Create Content material Plans).

Nevertheless, it’s extremely helpful to recreate this with your individual Google Search Console knowledge. You’ll be able to automate and replicate the method frequently.

There are advantages to this:

It helps establish which pages are attracting a various vary of search queries and which of them could also be extra targeted on particular matters.

Pages with a excessive UQC might current alternatives for additional optimization or enlargement to capitalize on a wider vary of search queries.

Analyzing the UQC per web page may reveal which place bands (e.g., positions 1-3, 4-10, and many others.) show extra variability when it comes to the variety of distinctive queries. This may help prioritize optimization efforts.

Understanding how UQC fluctuates all year long can inform content material planning and optimization methods to align with seasonal developments and capitalize on peak intervals of search exercise.

Evaluating UQC developments throughout completely different time intervals allows you to gauge the effectiveness of content material optimization efforts and establish areas for additional enchancment.

Use case #3: Assessing The Content material Danger

Jess Joyce, B2B & SaaS search engine optimisation professional has a income producing content material optimization framework she shares with purchasers.

One of many important steps is discovering pages that noticed a decline in clicks and impressions quarter over quarter. She depends on Search Console knowledge to take action.

Constructing this question could be nice however earlier than we soar into this, we have to assess the content material threat.

When you calculate the share of complete clicks contributed by the highest 1% of pages on an internet site primarily based on the variety of clicks every web page receives, you possibly can shortly pinpoint in case you are within the hazard zone – that means if there are potential dangers related to over-reliance on a small subset of pages.

Right here’s why this issues:

Over-reliance on a small subset of pages may be dangerous because it reduces the diversification of site visitors throughout the web site, making it susceptible to fluctuations or declines in site visitors to these particular pages.

Assessing the hazard zone: A proportion worth over 40% signifies a excessive reliance on the highest 1% of pages for natural site visitors, suggesting a possible threat.

This question offers useful perception into the distribution of natural site visitors throughout an internet site.

2. Determine Related Metrics

Analyzing your content material allows you to discern which content material is efficient and which isn’t, empowering you to make data-informed choices.

Whether or not it’s increasing or discontinuing sure content material varieties, leveraging insights out of your knowledge allows you to tailor your content material technique to match your viewers’s preferences.

Metrics and evaluation in content material advertising and marketing present the important knowledge for crafting content material that resonates together with your viewers.

Use Case #1: Determine The Queries And Pages That Carry The Most Clicks

For this use case, you want some fairly easy knowledge.

Let’s record all of it out right here:

URLs and/or queries.

Clicks.

Impressions.

Search kind: we solely need net searches, not photographs or different varieties.

Over a selected time interval.

The subsequent step is to find out which desk you need to get this info from. Keep in mind, as we mentioned beforehand, you have got:

searchdata_site_impression: Incorporates efficiency knowledge to your property aggregated by property.

searchdata_url_impression: Incorporates efficiency knowledge to your property aggregated by URL.

On this case, you want the efficiency knowledge aggregated by URL, so this implies utilizing the searchdata_url_impression desk.

Use Case #2: Calculating UQC

For this use case, we have to record what we want as properly:

URL: We need to calculate UQC per web page.

Question: We would like the queries related to every URL.

Search Kind: We solely need net searches, not photographs or different varieties.

We nonetheless want to select a desk, on this case, you want the efficiency knowledge aggregated by URL so this implies utilizing the searchdata_url_impression desk.

Use Case #3: Assessing The Content material Danger

To calculate the “clicks contribution of prime 1% pages by clicks,” you want the next metrics:

URL: Used to calculate the clicks contribution.

Clicks: The variety of clicks every URL has acquired.

Search Kind: Signifies the kind of search, usually ‘WEB’ for net searches.

We nonetheless want to select a desk, on this case, you want the efficiency knowledge aggregated by URL so this implies utilizing the searchdata_url_impression desk. (Narrator voice: discover a pattern? We’re working towards with one desk which allows you to get very conversant in it.)

3. Question The Information

Use Case #1: Determine The Queries And Pages That Carry The Most Clicks

Let’s tie all of it collectively to create a question, we could?

You need to see pages with probably the most clicks and impressions. This can be a easy code that you would be able to get from Marco Giordano’s BigQuery handbook obtainable by way of his e-newsletter.

Now we have barely modified it to go well with our wants and to make sure you hold prices low.

Copy this question to get the pages with probably the most clicks and impressions:

SELECT url, SUM(clicks) as total_clicks, SUM(impressions) as total_impressions FROM `pragm-ga4.searchconsole.searchdata_url_impression`

WHERE search_type=”WEB” and url NOT LIKE ‘%#%’

AND data_date = “2024-02-13″

GROUP BY url

ORDER BY total_clicks DESC;

It depends on one of the vital frequent SQL patterns. It allows you to group by a variable, in our case, URLs. After which, you possibly can choose aggregated metrics you need.

In our case, we specified impressions and clicks so we can be summing up clicks and impressions (two columns).

Let’s break down the question Marco shared:

SELECT assertion

SELECT url, SUM(clicks) as total_clicks, SUM(impressions) as total_impressions: Specifies the columns to be retrieved within the end result set.

url: Represents the URL of the webpage.

SUM(clicks) as total_clicks: Calculates the overall variety of clicks for every URL and assigns it an alias total_clicks.

SUM(impressions) as total_impressions: Calculates the overall variety of impressions for every URL and assigns it an alias total_impressions.

FROM clause

FROM table_name`pragm-ga4.searchconsole.searchdata_url_impression`: Specifies the desk from which to retrieve the info.

table_name: Represents the identify of the desk containing the related knowledge.

Vital to know: substitute our desk identify together with your desk identify.

WHERE clause

WHERE search_type = ‘WEB’ and url NOT LIKE ‘%#%’: Filters the info primarily based on particular circumstances.

search_type = ‘WEB’: Ensures that solely knowledge associated to net search outcomes is included.

url NOT LIKE ‘%#%’: Excludes URLs containing “#” of their tackle, filtering out anchor hyperlinks inside pages.

data_date = “2024-02-13”: This situation filters the info to solely embody information for the date ‘2024-02-13’. It ensures that the evaluation focuses solely on knowledge collected on this particular date, permitting for a extra granular examination of net exercise for that day.

(Narrator voice: we suggest you choose a date to maintain prices low.)

Vital to know: We suggest you choose two days earlier than at this time’s date to make sure that you have got knowledge obtainable.

GROUP BY clause

GROUP BY url: Teams the outcomes by the URL column.

This teams the info in order that the SUM perform calculates complete clicks and impressions for every distinctive URL.

ORDER BY clause

ORDER BY total_clicks DESC: Specifies the ordering of the end result set primarily based on the total_clicks column in descending order.

This arranges the URLs within the end result set primarily based on the overall variety of clicks, with the URL having the best variety of clicks showing first.

This question remains to be extra superior than most newcomers would create as a result of it not solely retrieves knowledge from the proper desk but in addition filters it primarily based on particular circumstances (eradicating anchor hyperlinks and search varieties that aren’t completely WEB).

After that, it calculates the overall variety of clicks and impressions for every URL, teams the outcomes by URL, and orders them primarily based on the overall variety of clicks in descending order.

This is the reason you need to begin by your use case first, determining metrics second after which writing the question.

Copy this SQL to get the queries in GSC with probably the most clicks and impressions:

SELECT question, SUM(clicks) as total_clicks, SUM(impressions) as total_impressions FROM `pragm-ga4.searchconsole.searchdata_url_impression`

WHERE search_type=”WEB”

AND data_date = “2024-02-13″

GROUP BY question

ORDER BY total_clicks DESC;

This is identical question, however as an alternative of getting the URL right here, we’ll retrieve the question and mixture the info primarily based on this discipline. You’ll be able to see that within the GROUP BY question portion.

The issue with this question is that you’re more likely to have a variety of “null” outcomes. These are anonymized queries. You’ll be able to take away these through the use of this question:

SELECT question, SUM(clicks) as total_clicks, SUM(impressions) as total_impressions FROM `pragm-ga4.searchconsole.searchdata_url_impression`

WHERE search_type=”WEB”

AND is_anonymized_query = false

AND data_date = “2024-02-13″

GROUP BY Question

ORDER BY total_clicks DESC;

Now, let’s go one step additional. I like how Iky Tai, search engine optimisation at GlobalShares went about it on LinkedIn. First, you could outline what the question does: you possibly can see the high-performing URLs by clicks for a particular date vary.

The SQL question has to retrieve the info from the desired desk, filter it primarily based on a date vary, not a selected date, calculate the overall variety of impressions and clicks for every URL, group the outcomes by URL, and get them organized primarily based on the overall variety of clicks in descending order.

Now that that is accomplished, we will construct the SQL question:

SELECT

url,

SUM(impressions) AS impressions,

SUM(clicks) AS clicks

FROM

`pragm-ga4.searchconsole.searchdata_url_impression`

WHERE

data_date BETWEEN DATE_SUB(CURRENT_DATE(), INTERVAL 3 DAY) AND DATE_SUB(CURRENT_DATE(), INTERVAL 1 DAY)

GROUP BY

url

ORDER BY

clicks DESC;

Earlier than you copy-paste your method to glory, take the time to grasp how that is constructed:

SELECT assertion

SELECT url, SUM(impressions) AS impressions, SUM(clicks) AS clicks: Specifies the columns to be retrieved within the end result set.

url: Represents the URL of the webpage.

SUM(impressions) AS impressions: Calculates the overall variety of impressions for every URL.

SUM(clicks) AS clicks: Calculates the overall variety of clicks for every URL.

FROM clause

FROM searchconsole.searchdata_url_impression: Specifies the desk from which to retrieve the info.

(Narrator voice: You’ll have to substitute the identify of your desk.)

searchconsole.searchdata_url_impression: Represents the dataset and desk containing the search knowledge for particular person URLs.

WHERE clause

WHERE data_date BETWEEN DATE_SUB(CURRENT_DATE(), INTERVAL 3 DAY) AND DATE_SUB(CURRENT_DATE(), INTERVAL 1 DAY): Filters the info primarily based on the date vary.

data_date: Represents the date when the search knowledge was recorded.

BETWEEN: Specifies the date vary from three days in the past (INTERVAL 3 DAY) to yesterday (INTERVAL 1 DAY).

DATE_SUB(CURRENT_DATE(), INTERVAL 3 DAY): Calculates the date three days in the past from the present date.

DATE_SUB(CURRENT_DATE(), INTERVAL 1 DAY): Calculates yesterday’s date from the present date.

Vital to know: As we stated beforehand, it’s possible you’ll not have knowledge obtainable for the earlier two days. Which means that you might change that interval to say 5 and three days as an alternative of three and at some point.

GROUP BY clause

GROUP BY url: Teams the outcomes by the URL column.

This teams the info in order that the SUM perform calculates impressions and clicks for every distinctive URL.

ORDER BY clause

ORDER BY clicks DESC: Specifies the ordering of the end result set primarily based on the clicks column in descending order.

This arranges the URLs within the end result set primarily based on the overall variety of clicks, with the URL having the best variety of clicks showing first.

Vital word: when first getting began, I encourage you to make use of an LLM like Gemini or ChatGPT to assist break down queries into chunks you possibly can perceive.

Use Case #2: Calculating UQC

Right here is one other helpful Marco’s handbook that now we have modified with a view to get you seven days of information (per week’s value):

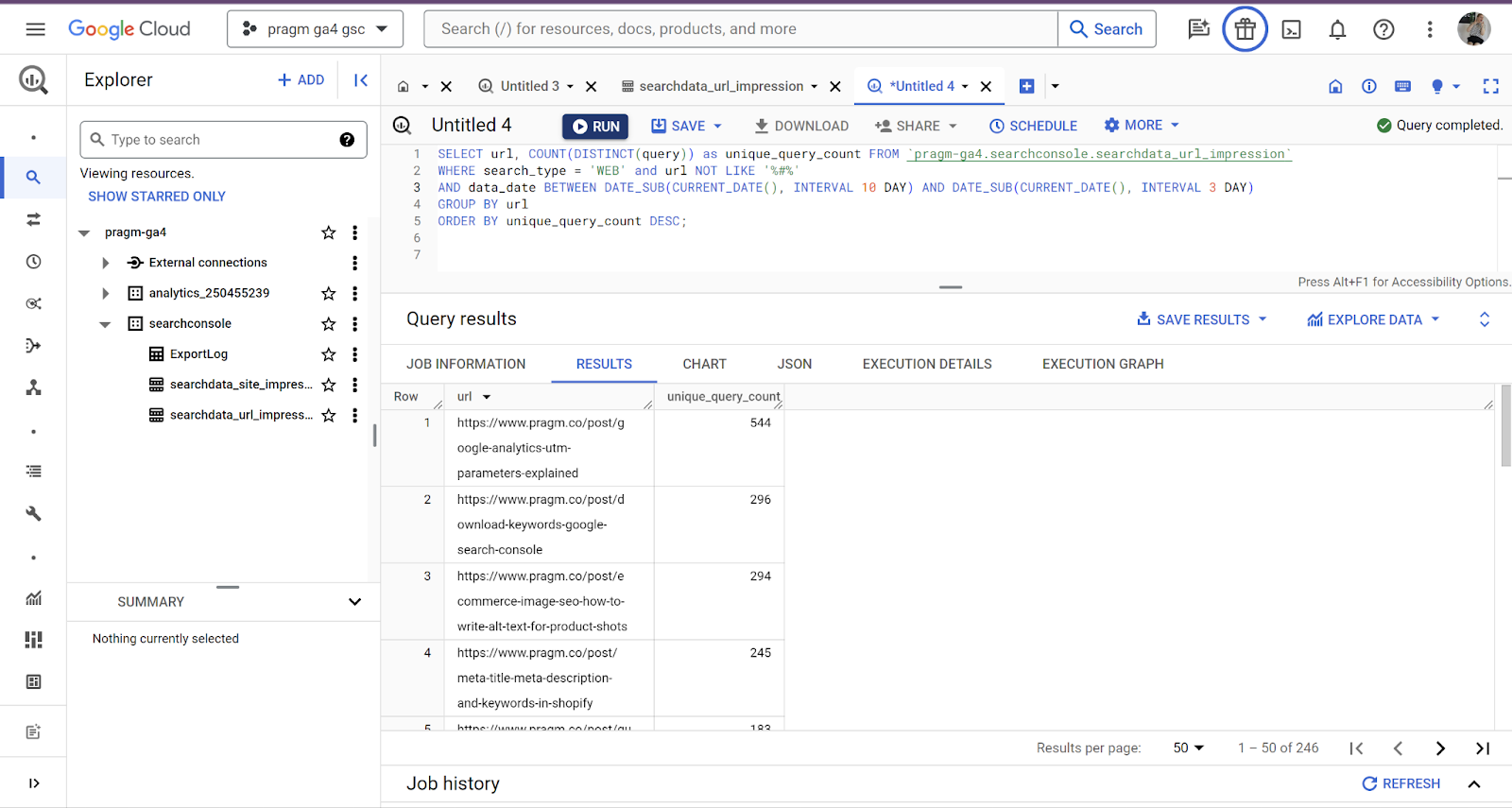

SELECT url, COUNT(DISTINCT(question)) as unique_query_count FROM `pragm-ga4.searchconsole.searchdata_url_impression`

WHERE search_type=”WEB” and url NOT LIKE ‘%#%’

AND data_date BETWEEN DATE_SUB(CURRENT_DATE(), INTERVAL 10 DAY) AND DATE_SUB(CURRENT_DATE(), INTERVAL 3 DAY)

GROUP BY url

ORDER BY unique_query_count DESC;

Screenshot from Google Cloud, February 2024

Screenshot from Google Cloud, February 2024

This time, we won’t break down the question.

This question calculates the Distinctive Question Rely (UQC) per web page by counting the distinct queries related to every URL, excluding URLs containing ‘#’ and filtering for net searches.

It does that for an interval of seven days whereas making an allowance for knowledge is probably not obtainable for the 2 earlier days.

The outcomes are then sorted primarily based on the depend of distinctive queries in descending order, offering insights into which pages appeal to a various vary of search queries.

Use Case #3: Assessing The Content material Danger

This question calculates the share of complete clicks accounted for by the highest 1% of URLs when it comes to clicks. This can be a way more superior question than the earlier ones. It’s taken straight from Marco’s Playbook:

WITH PageClicksRanked AS (

SELECT

url,

SUM(clicks) AS total_clicks,

PERCENT_RANK() OVER (ORDER BY SUM(clicks) DESC) AS percent_rank

FROM

`pragm-ga4.searchconsole.searchdata_url_impression`

WHERE

search_type=”WEB”

AND url NOT LIKE ‘%#%’

GROUP BY

url

)

SELECT

ROUND(SUM(CASE WHEN percent_rank <= 0.01 THEN total_clicks ELSE 0 END) / SUM(total_clicks) * 100, 2) AS percentage_of_clicks

FROM

PageClicksRanked;

This SQL question is extra complicated as a result of it incorporates superior methods like window features, conditional aggregation, and customary desk expressions.

Let’s break it down:

Widespread Desk Expression (CTE) – PageClicksRanked

This a part of the question creates a short lived end result set named PageClicksRanked.

It calculates the overall variety of clicks for every URL and assigns a percentile rank to every URL primarily based on the overall variety of clicks. The percentile rank is calculated utilizing the PERCENT_RANK() window perform, which assigns a relative rank to every row inside a partition of the end result set.

Columns chosen:

url: The URL from which the clicks originated.

SUM(clicks) AS total_clicks: The entire variety of clicks for every URL.

PERCENT_RANK() OVER (ORDER BY SUM(clicks) DESC) AS percent_rank: Calculates the percentile rank for every URL primarily based on the overall variety of clicks, ordered in descending order.

Situations

search_type = ‘WEB’: Filters the info to incorporate solely net search outcomes.

AND url NOT LIKE ‘%#%’: Excludes URLs containing “#” from the end result set.

Grouping

GROUP BY url: Teams the info by URL to calculate the overall clicks for every URL.

Primary Question

This a part of the question calculates the share of complete clicks accounted for by the highest 1% of URLs when it comes to clicks.

It sums up the overall clicks for URLs whose percentile rank is lower than or equal to 0.01 (prime 1%) and divides it by the overall sum of clicks throughout all URLs. Then, it multiplies the end result by 100 to get the share.

Columns chosen

ROUND(SUM(CASE WHEN percent_rank <= 0.01 THEN total_clicks ELSE 0 END) / SUM(total_clicks) * 100, 2) AS percentage_of_clicks: Calculates the share of clicks accounted for by the highest 1% of URLs. The CASE assertion filters out the URLs with a percentile rank lower than or equal to 0.01, after which it sums up the overall clicks for these URLs. Lastly, it divides this sum by the overall sum of clicks throughout all URLs and multiplies it by 100 to get the share. The ROUND perform is used to around the end result to 2 decimal locations.

Supply

FROM PageClicksRanked: Makes use of the PageClicksRanked CTE as the info supply for calculations.

(Narrator voice: for this reason we don’t share extra complicated queries instantly. Writing complicated queries instantly requires information, follow, and understanding of the underlying knowledge and enterprise necessities.)

With a purpose to write such queries, you want:

A strong understanding of SQL syntax: SELECT statements, GROUP BY, mixture features, subqueries and window features to begin.

A deep understanding of the database schema which is why we took the time to undergo them in one other article.

Follow! Writing and optimizing SQL queries does the trick. So does engaged on datasets and fixing analytical issues! Follow means taking an iterative method to experiment, take a look at and refine queries.

Having an excellent cookbook: Setting apart good queries you possibly can tweak and depend on.

Drawback-solving expertise: To seek out the proper method, you have got to have the ability to break down complicated analytical duties into manageable steps. That’s why we began with the five-step framework.

A efficiency mindset: You need to enhance question efficiency, particularly for complicated queries working on massive datasets. When you don’t, you might find yourself spending some huge cash in BigQuery.

4. Create Looker Studio Dashboards

As soon as that is accomplished, you need to use Looker Studio to construct dashboards and visualizations that showcase your content material efficiency metrics.

You’ll be able to customise these dashboards to current knowledge in a significant method for various stakeholders and groups. This implies you aren’t the one one accessing the data.

We’ll dive into this portion of the framework in one other article.

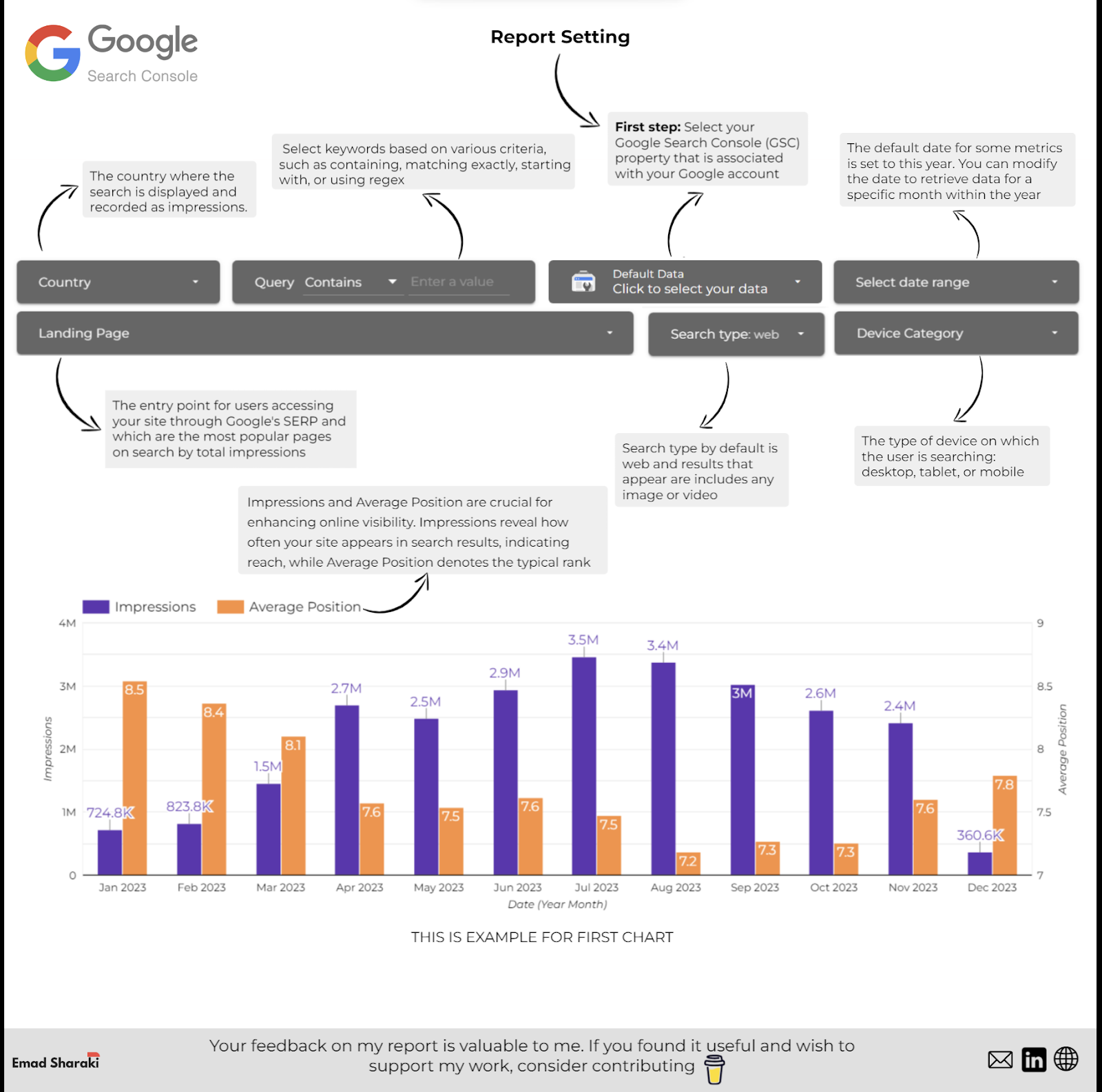

Nevertheless, if you wish to get began with a Looker Studio dashboard utilizing BigQuery knowledge, Emad Sharaki shared his superior dashboard. We suggest you give it a strive.

Picture from Emad Sharaki, February 2024

Picture from Emad Sharaki, February 2024

5. Automate Reporting

After getting accomplished all this, you possibly can arrange scheduled queries in BigQuery to mechanically fetch GSC knowledge current within the tables at common intervals.

This implies you possibly can automate the technology and distribution of reviews inside your organization.

You’ll be able to take a look at the official documentation for this portion for now. We’ll cowl this at a later date in one other devoted article.

The one tip we’ll share right here is that you need to schedule queries after the standard export window to make sure you’re querying the latest obtainable knowledge.

With a purpose to monitor the info freshness, you need to monitor export completion occasions in BigQuery’s export log.

You need to use the reporting automation to allow different groups in relation to content material creation and optimization. Gianna Brachetti-Truskawa, search engine optimisation PM and strategist, helps editorial groups by integrating reviews straight into the CMS.

This implies editors can filter present articles by efficiency and prioritize their optimization efforts accordingly. One other automation reporting aspect to think about is to combine with Jira to attach your efficiency to a dashboard with customized guidelines.

Which means that articles may be pulled to the highest of the backlog and that seasonal matters may be added to the backlog in a well timed method to create momentum.

Going Additional

Clearly, you will want extra use circumstances and a deeper understanding of the kind of content material audit you need to conduct.

Nevertheless, the framework we shared on this article is a good way to make sure issues keep structured. If you wish to take it additional, Lazarina Stoy, search engine optimisation knowledge professional, has a couple of suggestions for you:

“When doing content material efficiency evaluation, it’s vital to grasp that not all content material is created equal. Make the most of SQL Case/When statements to create subsets of the content material primarily based on web page kind (firm web page, weblog submit, case examine, and many others.), content material construction patterns (idea explainer, information merchandise, tutorial, information, and many others), title patterns, goal intent, goal audiences, content material clusters, and another kind of classification that’s distinctive to your content material.

That method you possibly can monitor and troubleshoot in the event you detect patterns which might be underperforming, in addition to amplify the efforts which might be paying off, each time such are detected.”

When you create queries primarily based on these issues, share them with us so we will add them to the cookbook of queries one can use for content material efficiency evaluation!

Conclusion

By following this structured method, you possibly can successfully leverage BigQuery and GSC knowledge to investigate and optimize your content material efficiency whereas automating reporting to maintain stakeholders knowledgeable.

Keep in mind, gathering everybody else’s queries won’t make you an in a single day BigQuery professional. Your worth lies in determining use circumstances.

After that, you possibly can determine the metrics you want and tweak the queries others created or write your individual. After getting that within the bag, it’s time to be knowledgeable by permitting others to make use of the dashboard you created to visualise your findings.

Your peace of thoughts will come when you automate a few of these actions and develop your expertise and queries much more!

Extra assets:

Featured Picture: Suvit Topaiboon/Shutterstock

[ad_2]

Supply hyperlink