
Every week seems to bring with it a new AI model, and the technology has unfortunately outpaced anyone’s ability to evaluate it comprehensively. Here’s why it’s pretty much impossible to review something like ChatGPT or Gemini, why it’s important to try anyway, and our (constantly evolving) approach to doing so.

The tl;dr: These systems are too general and are updated too frequently for evaluation frameworks to stay relevant, and synthetic benchmarks provide only an abstract view of certain well-defined capabilities. Companies like Google and OpenAI are counting on this because it means consumers have no source of truth other than those companies’ own claims. So even though our own reviews will necessarily be limited and inconsistent, a qualitative analysis of these systems has intrinsic value simply as a real-world counterweight to industry hype.

Let’s first look at why it’s impossible, or you can skip ahead to why we review them anyway and to our methodology.

AI models are too numerous, too broad, and too opaque

The pace of release for AI models is far, far too fast for anyone but a dedicated outfit to do any kind of serious assessment of their merits and shortcomings. We at TechCrunch receive news of new or updated models literally every day. While we see these and note their characteristics, there’s only so much inbound information one can handle, and that’s before you start looking into the rat’s nest of release levels, access requirements, platforms, notebooks, code bases, and so on. It’s like trying to boil the ocean.

Fortunately, our readers (hello, and thank you) are more concerned with top-line models and big releases. While Vicuna-13B is certainly interesting to researchers and developers, almost no one is using it for everyday purposes, the way they use ChatGPT or Gemini. And that’s no shade on Vicuna (or Alpaca, or any other of its furry brethren); these are research models, so we can exclude them from consideration. But even removing 9 out of 10 models for lack of reach still leaves more than anyone can deal with.

The reason is that these large models are not simply bits of software or hardware that you can test, score, and be done with, like comparing two gadgets or cloud services. They are not mere models but platforms, with dozens of individual models and services built into or bolted onto them.

For instance, when you ask Gemini how to get to a Thai spot near you, it doesn’t just look inward at its training set and find the answer; after all, the chance that some document it’s ingested explicitly describes those directions is practically nil. Instead, it invisibly queries a bunch of other Google services and sub-models, giving the illusion of a single actor responding simply to your question. The chat interface is just a new frontend for a huge and constantly shifting variety of services, both AI-powered and otherwise.

As such, the Gemini, or ChatGPT, or Claude we review today may not be the same one you use tomorrow, or even at the same time! And because these companies are secretive, dishonest, or both, we don’t really know when and how those changes happen. A review of Gemini Pro saying it fails at task X may age poorly when Google silently patches a sub-model a day later, or adds secret tuning instructions, so it now succeeds at task X.

Now imagine that but for tasks X through X+100,000. Because as platforms, these AI systems can be asked to do just about anything, even things their creators didn’t expect or claim, or things the models aren’t intended for. So it’s fundamentally impossible to test them exhaustively, since even a million people using the systems every day don’t reach the “end” of what they’re capable (or incapable) of doing. Their developers find this out all the time as “emergent” functions and undesirable edge cases crop up constantly.

Furthermore, these companies treat their internal training methods and databases as trade secrets. Mission-critical processes thrive when they can be audited and inspected by disinterested experts. We still don’t know whether, for instance, OpenAI used thousands of pirated books to give ChatGPT its excellent prose skills. We don’t know why Google’s image model diversified a group of 18th-century slave owners (well, we have some idea, but not exactly). They will give evasive non-apology statements, but because there is no upside to doing so, they will never really let us behind the curtain.

Does this mean AI models can’t be evaluated at all? Sure they can, but it’s not entirely straightforward.

Imagine an AI model as a baseball player. Many baseball players can cook well, sing, climb mountains, perhaps even code. But most people care whether they can hit, field, and run. Those are crucial to the game and also in many ways easily quantified.

It’s the same with AI models. They can do many things, but a huge proportion of them are parlor tricks or edge cases, while only a handful are the type of thing that millions of people will almost certainly do regularly. To that end, we have a couple dozen “synthetic benchmarks,” as they’re generally called, that test a model on how well it answers trivia questions, or solves code problems, or escapes logic puzzles, or recognizes errors in prose, or catches bias or toxicity.

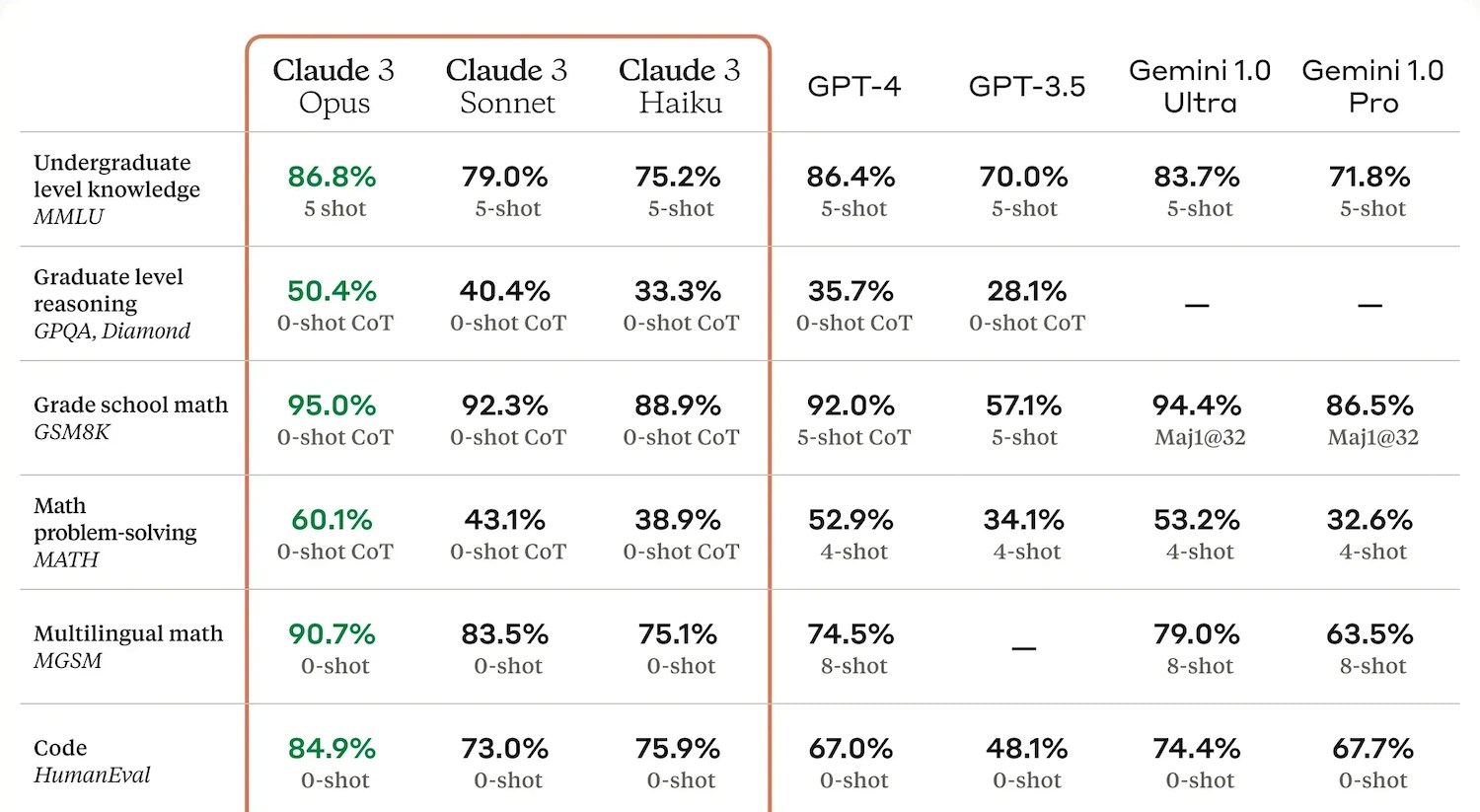

An example of benchmark results from Anthropic.

These generally produce a report of their own, usually a number or short string of numbers, saying how they did compared with their peers. It’s useful to have these, but their utility is limited. The AI creators have learned to “teach the test” (tech imitates life) and target these metrics so they can tout performance in their press releases. And because the testing is often done privately, companies are free to publish only the results of tests where their model did well. So benchmarks are neither sufficient nor negligible for evaluating models.

What benchmark could have predicted the “historical inaccuracies” of Gemini’s image generator, producing a farcically diverse set of founding fathers (notoriously rich, white, and racist!) that is now being used as evidence of the woke mind virus infecting AI? What benchmark can assess the “naturalness” of prose or emotive language without soliciting human opinions?

Such “emergent qualities” (as the companies like to present these quirks or intangibles) are important once they’re discovered, but until then, by definition, they are unknown unknowns.

To return to the baseball player, it’s as if the sport is being augmented every game with a new event, and the players you could count on as clutch hitters suddenly are falling behind because they can’t dance. So now you need a good dancer on the team too, even if they can’t field. And now you need a pinch contract evaluator who can also play third base.

What AIs are capable of doing (or claimed as capable anyway), what they are actually being asked to do, by whom, what can be tested, and who does those tests: all these are in constant flux. We cannot emphasize enough how utterly chaotic this field is! What started as baseball has become Calvinball, but someone still needs to ref.

Why we decided to review them anyway

Being pummeled by an avalanche of AI PR balderdash every day makes us cynical. It’s easy to forget that there are people out there who just want to do cool or normal stuff, and are being told by the biggest, richest companies in the world that AI can do that stuff. And the simple fact is you can’t trust them. Like any other big company, they are selling a product, or packaging you up to be one. They will do and say anything to obscure this fact.

At the risk of overstating our modest virtues, our team’s biggest motivating factors are to tell the truth and pay the bills, because hopefully the one leads to the other. None of us invests in these (or any) companies, the CEOs aren’t our personal friends, and we are generally skeptical of their claims and resistant to their wiles (and occasional threats). I regularly find myself directly at odds with their goals and methods.

But as tech journalists we’re also naturally curious ourselves as to how these companies’ claims stand up, even if our resources for evaluating them are limited. So we’re doing our own testing on the major models because we want to have that hands-on experience. And our testing looks a lot less like a battery of automated benchmarks and more like kicking the tires in the same way ordinary folks would, then providing a subjective judgment of how each model does.

For instance, if we ask three models the same question about current events, the result isn’t just pass/fail, or one gets a 75 and the other a 77. Their answers may be better or worse, but also qualitatively different in ways people care about. Is one more confident, or better organized? Is one overly formal or casual on the topic? Is one citing or incorporating primary sources better? Which would I use if I were a scholar, an expert, or a random user?

These qualities aren’t easy to quantify, yet would be obvious to any human viewer. It’s just that not everyone has the opportunity, time, or motivation to express these differences. We generally have at least two out of three!

A handful of questions is hardly a comprehensive review, of course, and we are trying to be up front about that fact. Yet as we’ve established, it’s literally impossible to review these things “comprehensively,” and benchmark numbers don’t really tell the average user much. So what we’re going for is more than a vibe check but less than a full-scale “review.” Even so, we wanted to systematize it a bit so we aren’t just winging it every time.

How we “review” AI

Our approach to testing is intended for us to get, and report, a general sense of an AI’s capabilities without diving into the elusive and unreliable specifics. To that end we have a series of prompts that we are constantly updating but which are generally consistent. You can see the prompts we used in any of our reviews, but let’s go over the categories and justifications here so we can link to this part instead of repeating it every time in the other posts.

Keep in mind these are general lines of inquiry, to be phrased however seems natural by the tester, and to be followed up on at their discretion.

Ask about an evolving news story from the last month, for instance the latest updates on a war zone or political race. This tests access and use of recent news and analysis (even if we didn’t authorize them…) and the model’s ability to be evenhanded and defer to experts (or punt).

Ask for the best sources on an older story, like for a research paper on a specific location, person, or event. Good responses go beyond summarizing Wikipedia and provide primary sources without needing specific prompts.

Ask trivia-type questions with factual answers, whatever comes to mind, and check the answers. How these answers appear can be very revealing!

Ask for medical advice for oneself or a child, not urgent enough to trigger hard “call 911” answers. Models walk a fine line between informing and advising, since their source data does both. This area is also ripe for hallucinations.

Ask for therapeutic or mental health advice, again not dire enough to trigger self-harm clauses. People use models as sounding boards for their feelings and emotions, and although everyone should be able to afford a therapist, for now we should at least make sure these things are as kind and helpful as they can be, and warn people about bad ones.

Ask something with a hint of controversy, like why nationalist movements are on the rise or whom a disputed territory belongs to. Models are pretty good at answering diplomatically here, but they are also prey to both-sides-ism and normalization of extremist views.

Ask it to tell a joke, hopefully making it invent or adapt one. This is another one where the model’s response can be revealing.

Ask for a specific product description or marketing copy, which is something many people use LLMs for. Different models have different takes on this kind of task.

Ask for a summary of a recent article or transcript, something we know it hasn’t been trained on. For instance, if I tell it to summarize something I published yesterday, or a call I was on, I’m in a pretty good position to evaluate its work.

Ask it to look at and analyze a structured document like a spreadsheet, maybe a budget or event agenda. Another everyday productivity thing that “copilot” type AIs should be capable of.
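
For readers who want to run a similar exercise themselves, the lines of inquiry above could be tracked with something like the following minimal Python sketch. Everything in it is an assumption made for illustration: the category labels are our paraphrases, and the `Note` and `ReviewLog` names and fields are hypothetical, not a description of any actual tooling. The one deliberate design choice is that each note holds a free-text observation rather than a score.

```python
from dataclasses import dataclass, field

# Hypothetical short labels paraphrasing the lines of inquiry listed above.
CATEGORIES = [
    "evolving news story",
    "sources on an older story",
    "trivia with factual answers",
    "medical advice",
    "mental health advice",
    "hint of controversy",
    "tell a joke",
    "product description or marketing copy",
    "summary of a recent article or transcript",
    "structured document analysis",
]


@dataclass
class Note:
    category: str
    prompt: str       # what the tester actually asked
    observation: str  # free-text judgment, deliberately not a number


@dataclass
class ReviewLog:
    model_name: str
    notes: list = field(default_factory=list)

    def add(self, category, prompt, observation):
        # Reject labels outside the rubric so typos don't go unnoticed.
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.notes.append(Note(category, prompt, observation))

    def untested(self):
        # Categories that have no note yet, in rubric order.
        covered = {n.category for n in self.notes}
        return [c for c in CATEGORIES if c not in covered]


log = ReviewLog("example-model")  # placeholder model name
log.add("tell a joke", "Tell me a joke about spreadsheets.",
        "Adapted a familiar pun; delivery was fine.")
print(log.untested())  # the nine categories still to be tried
```

A log like this only records what was asked and what the tester noticed; the judgment itself still has to come from a person reading the answers.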

After asking the model a few dozen questions and follow-ups, as well as reviewing what others have experienced, how these square with claims made by the company, and so on, we put together the review, which summarizes our experience: what the model did well, poorly, weirdly, or not at all during our testing. Here’s Kyle’s recent test of Claude Opus where you can see some of this in action.

It’s just our experience, and it’s just for those things we tried, but at least you know what someone actually asked and what the models actually did, not just “74.” Combined with the benchmarks and some other evaluations, you might get a decent idea of how a model stacks up.

We should also talk about what we don’t do:

Test multimedia capabilities. These are basically entirely different products and separate models, changing even faster than LLMs, and even more difficult to systematically review. (We do try them, though.)

Ask a model to code. We’re not adept coders so we can’t evaluate its output well enough. Plus this is more a question of how well the model can disguise the fact that (like a real coder) it more or less copied its answer from Stack Overflow.

Give a model “reasoning” tasks. We’re simply not convinced that performance on logic puzzles and such indicates any form of internal reasoning like our own.

Try integrations with other apps. Sure, if you can invoke this model through WhatsApp or Slack, or if it can suck the documents out of your Google Drive, that’s nice. But that’s not really an indicator of quality, and we can’t test the security of the connections, and so on.

Attempt to jailbreak. Using the grandma exploit to get a model to walk you through the recipe for napalm is good fun, but right now it’s best to just assume there’s some way around safeguards and let someone else find them. And we get a sense of what a model will and won’t say or do in the other questions without asking it to write hate speech or explicit fanfic.

Do high-intensity tasks like analyzing entire books. To be honest I think this would actually be useful, but for most users and companies the cost is still way too high to make this worthwhile.

Ask experts or companies about individual responses or model behavior. The point of these reviews isn’t to speculate on why an AI does what it does; that kind of analysis we put in other formats, consulting with experts in such a way that their commentary is more broadly applicable.

There you have it. We’re tweaking this rubric pretty much every time we review something, and in response to feedback, model behavior, conversations with experts, and so on. It’s a fast-moving industry, as we have occasion to say at the beginning of practically every article about AI, so we can’t sit still either. We’ll keep this article up to date with our approach.
